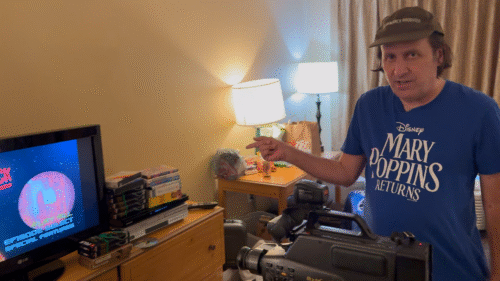

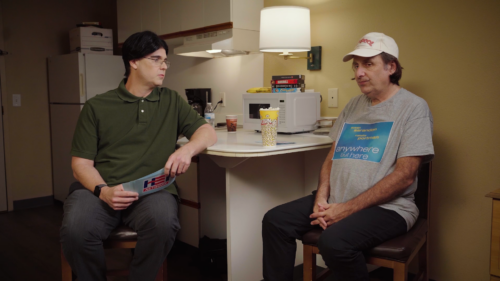

1201 ‘Mass’ & ‘No Time To Die’

Air Date: 10/06/2021

Credits

Written By

Tim Heidecker

Gregg Turkington

Eric Notarnicola

Andrew Porter

Directed and Cut By

Vera Drew

Executive Producers

Tim Heidecker

Gregg Turkington

Dave Kneebone

Eric Notarnicola

Andrew Porter

Co-Executive Producer

Janel Kranking

Producer

Kate McGraw

1st Assistant Director

Joe Bohn

2nd Assistant Director

Jeff Pallotta

Director of Photography

Terry Zumalt

Production Designer

Jillian Oliver

Costume Designer

Abigail Keever

Camera Operators

Brian Sowell

Zack Campbell

Camera Assistants

Devin Keebler

Josh Greytak

Art Director

Carson Giles

Set Dressers

Joe Altamoro

Sam Rogich

Prop Master

Rachel Ra

Graphic Artist

Tak Boroyan

Sound Mixer

John Maynard

Boom Operator

Dan Kloch

Hair and Makeup Department Head

Amber Mari

Grips

Dennis Pires

Pauli Dandino

Electricians

Ardy Fatehi

Wrecks Brixton

Assistant Editor and 3D Animator

Dan Cupps

Production Coordinator

Shannon Cloud

Office Production Assistant

Anna Christinan

Set Production Assistants

Pierre Hamlin

Tyler Gillentine

COVID Compliance Officer

Jessica Mathis

Production Accountants

Nathan Wakefield

Jason Tejada

Executive Producer for HEI Network

Justin Gaynor

Playlist

1

101 ‘The Man With The Iron Fists’ and ‘Flight’

2

102 ‘Skyfall’ and ‘Lincoln’

3

103 ‘Twilight: Part Two’ and ‘Anna Karenina’

4

104 ‘Red Dawn’ and ‘Life of Pi’

5

105 ‘The Frozen Ground’ and ‘Universal Soldier: Day of Reckoning’

6

106 ‘Playing for Keeps’ and ‘Hyde Park on Hudson’

7

107 ‘The Hobbit’ and ‘Les Miserables’

8

108 ‘Zero Dark Thirty’ and ‘Monsters, Inc. 3D’

9

109 ‘Jack Reacher’ and ‘Cirque du Soleil: Worlds Away’

10

110 ‘Parental Guidance’ and ‘Django Unchained’

11

201 ‘Side Effects’ and ‘Identity Thief’

12

202 ‘A Good Day to Die Hard’ and ‘Escape From Planet Earth’

13

203 ‘Snitch’ and ‘Dark Skies’

14

1st Annual On Cinema Oscar Special

15

204 ‘21 and Over’ and ‘Jack The Giant Slayer’

16

205 ‘Oz: The Great and Powerful’ and ‘Dead Man Down’

17

206 ‘Carrie’ and ‘The Incredible Burt Wonderstone’

18

207 ‘The Croods’

19

208 ‘G.I. Joe: Retaliation’ and ‘Temptation: Confessions of a Marriage Counselor’

20

209 ‘The Company You Keep’ and ‘Jurassic Park 3D’

21

A Tribute to Steven Spielberg in 3D

22

210 ‘Oblivion’ and ‘Scary Movie 5’

23

The Future of Cinema

24

301 ‘The Lone Ranger’ and ‘Despicable Me 2’

25

302 ‘Grown Ups 2’ and ‘Pacific Rim’

26

303 ‘Turbo’ and ‘Red 2’

27

304 ‘The Wolverine’ and ‘Blue Jasmine’

28

305 ‘The Smurfs 2’ and ‘300: Rise of an Empire’

29

306 ‘Percy Jackson: Sea of Monsters’ and ‘Elysium’

30

307 ‘Kick Ass 2’

31

308 ‘The World’s End’ and ‘The Colony’

32

309 ‘One Direction: This is Us’ and ‘The Getaway’

33

310 ‘Riddick’

34

311 On Cinema Christmas Special

35

401 ‘Lone Survivor’ and ‘Her’

36

402 ‘The Nut Job’ and ‘Ride Along’

37

403 ‘I, Frankenstein’ and ‘Gimme Shelter’

38

404 On Alternative Medicine

39

405 ‘The Lego Movie’ and ‘Robocop’

40

406 ‘The Girl on a Bicycle’ and ‘Endless Love’

41

407 ‘Pompeii’

42

408 ‘Welcome to Yesterday’ (aka ‘Almanac’) and ‘Non-Stop’

43

2nd Annual On Cinema Oscar Special

44

409 ‘Need for Speed’ and ‘Walk of Shame’

45

410 ‘Muppets Most Wanted’

46

501 ‘Deliver Us From Evil’ and ‘Tammy’

47

502 ‘Dawn of the Planet of the Apes’ and ‘So It Goes’

48

503 ‘Jupiter Ascending’ and ‘Planes: Fire and Rescue’

49

Decker – Classified – Episode 1

50

504 ‘Wish I Was Here’ and ‘Hercules’

51

Decker – Classified – Episode 2

52

505 ‘Guardians of the Galaxy’ and ‘Get On Up’

53

Decker – Classified – Episode 3

54

506 ‘Teenage Mutant Ninja Turtles’ and ‘Into The Storm’

55

Decker – Classified – Episode 4

56

507 ‘Let’s Be Cops’ and ‘The Expendables 3’

57

On Cinema After Cinema

58

Decker – Classified – Episode 5

59

508 ‘Sin City: A Dame to Kill For’

60

509 ‘The November Man’ and ‘Jessabelle’

61

510 ‘Dark Places’ and ‘The Green Inferno’

62

601 ‘Jupiter Ascending’ and ‘The Spongebob Movie: Sponge Out of Water’

63

602 ‘Fifty Shades of Grey’ and ‘Kingsman: The Secret Service’

64

603 ‘Hot Tub Time Machine 2’ and ‘McFarland, USA’

65

3rd Annual On Cinema Oscar Special

66

604 ‘Focus’ and ‘Lazarus’

67

605 ‘Chappie’ and ‘The Second Best Exotic Marigold Hotel’

68

201 Port Of Call: Hawaii – Episode 1

69

Port Of Call: Hawaii – Episode 2

70

Our Values Are Under Attack

71

606 ‘In the Heart of the Sea’ and ‘Cinderella’

72

Port Of Call: Hawaii – Episode 3

73

Port Of Call: Hawaii – Episode 4

74

Port Of Call: Hawaii – Episode 5

75

Port Of Call: Hawaii – Episode 6

76

207 Port Of Call: Hawaii – Episode 7

77

607 ‘The Divergent Series: Insurgent’ and ‘The Gunman’

78

208 Port Of Call: Hawaii – Episode 8

79

209 Port Of Call: Hawaii – Episode 9

80

210 Port Of Call: Hawaii – Episode 10

81

211 Port Of Call: Hawaii – Episode 11

82

Port Of Call: Hawaii – Episode 12

83

608 ‘Get Hard’ and ‘Home’

84

Port Of Call: Hawaii – Episode 13

85

Port Of Call: Hawaii – Episode 14

86

Port Of Call: Hawaii – Episode 15

87

Port Of Call: Hawaii – Episode 16

88

Port Of Call: Hawaii – Episode 17

89

609 ‘Furious 7’ and ‘Woman In Gold’

90

Port Of Call: Hawaii – Episode 18

91

Port Of Call: Hawaii – Episode 19

92

Port Of Call: Hawaii – Episode 20

93

610 ‘Ex Machina’ and ‘The Moon and the Sun’

94

701 ‘Ant Man’ and ‘Fantastic Four’

95

702 ‘Black Mass’ and ‘Maze Runner: The Scorch Trials’

96

703 ‘The Intern’ and ‘Hotel Transylvania 2’

97

704 Doctor San Forgiveness Special

98

705 ‘Pan’ and ‘Steve Jobs’

99

Decker vs Dracula – Episode 1

100

Decker vs Dracula – Episode 2

101

706 ‘Goosebumps’ and ‘Bridge of Spies’

102

Decker vs Dracula – Episode 3

103

Decker vs Dracula – Episode 4

104

707 ‘Jem and the Holograms’ and ‘Paranormal Activity: The Ghost Dimensions’

105

708 ‘Autobahn’ and ‘Scout’s Guide to the Zombie Apocalypse’

106

709 ‘Spectre’ and ‘The Peanuts Movie’

107

710 ‘Rings’ and ‘By The Sea’

108

Farewell Tom Cruise

109

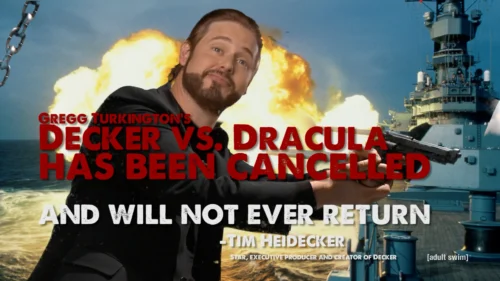

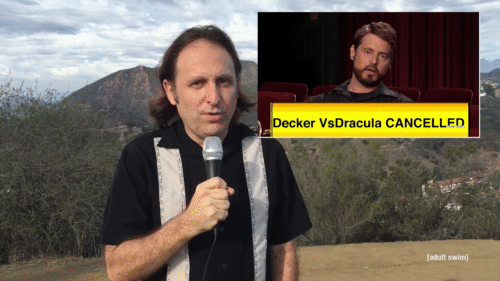

Decker Vs. Dracula: Behind the Truth

110

Decker Versus Dracula: The Lost Works

111

4th Annual On Cinema Oscar Special

112

Empty Bottle

113

Oscar Medley (4th Annual On Cinema Oscar Special)

114

DEKKAR – Empty Bottle (Live At the Bourbon and Boot) New Orleans Style

115

Dekkar: Trigger Everything

116

801 ‘Star Trek Beyond’ and ‘Hillary’s America’

117

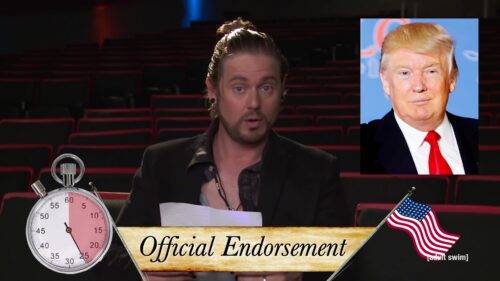

Official Endorsement

118

802 ‘Cafe Society’ and ‘Jason Bourne’

119

803 ‘Suicide Squad’ and ‘Nine Lives’

120

804 ‘Sausage Party’ and ‘Pete’s Dragon’

121

805 ‘Ben-Hur’ and ‘Kubo and the Two Strings’

122

806 ‘Hands of Stone’ and ‘Mechanic: Resurrection’

123

807 ‘Solace’ and ‘The Light Between Oceans’

124

808 ‘Sully’ and ‘When The Bough Breaks’

125

809 ‘Snowden’ and ‘Bridget Jones’s Baby’

126

810 ‘The Magnificent Seven’ and ‘Storks’

127

Our Cinema Oscar Special

128

901 ‘Kong: Skull Island’ and ‘The Wall’

129

902 ‘T2 Trainspotting’ and ‘The Belko Experiment’

130

903 ‘Chips’ and ‘Power Rangers’

131

904 ‘Boss Baby’ and ‘The Zookeeper’s Wife’

132

905 ‘Smurfs: The Lost Village’ and ‘The Case for Christ’

133

906 ‘Fast and Furious 8’ and ‘The Lost City of Z’

134

907 ‘Unforgettable’, ‘Animal Crackers’, and ‘Born in China’

135

908 ‘The Circle’ and ‘How to be a Latin Lover’

136

‘Guardians of the Gallery Vol. 2’ and ‘The Lovers’

137

909 ‘Guardians of the Galaxy Vol. 2’ and ‘The Lovers’

138

910 ‘King Arthur’ and ‘Snatched’

139

Dekkar (Featuring Axiom): You Asked For a Hero

140

The Trial of Tim Heidecker

141

The Trial of Tim Heidecker – Day 1

142

The Trial of Tim Heidecker – Day 2

143

The Trial of Tim Heidecker – Day 3

144

The Trial of Tim Heidecker – Day 4

145

The Trial of Tim Heidecker – Day 5

146

The Trial of Tim Heidecker – Verdict

147

The Trial of Tim Heidecker (Complete)

148

5th Annual On Cinema Oscar Special

149

1001 ‘Pacific Rim Uprising’ and ‘Sherlock Gnomes’

150

1002 ‘Ready Player One’ and ‘Tyler Perry’s Acrimony’

151

1003 ‘Chappaquiddick’ and ‘You Were Never Really Here’

152

1004 ‘Sgt. Stubby: An American Hero’ and ‘Overboard’

153

1005 ‘Rampage’ and ‘Super Troopers 2’

154

1006 ‘Traffik’

155

1007 ‘Avengers: Infinity War’

156

1008 ‘Life of the Party’ and ‘Breaking In’

157

1009 ‘Deadpool 2’ and ‘Show Dogs’

158

1010 ‘Solo: A Star Wars Movie’

159

The New On Cinema Oscar Special

160

DKR – Empty Bottle 3.0

161

1101 ‘Abominable’ & ‘Judy’

162

1102 ‘Joker’ & ‘The Current War’

163

Mister America

164

1103 ‘Gemini Man’ & ‘The Addams Family’

165

1104 ‘Mister America’ & ‘Maleficent: Mistress of Evil’

166

1105 ‘Black and Blue’ & ‘The Last Full Measure’

167

1106 ‘Terminator: Dark Fate’ & ‘Motherless Brooklyn’

168

1107 ‘Midway’ & ‘Arctic Dogs’

169

1108 ‘Charlie’s Angels’ & ‘The Report’

170

1109 On Cinema Debt Forgiveness Special

171

1110 ‘Knives Out’ & ‘Queen & Slim’

172

7th Annual On Cinema Oscar Special

173

8th Annual On Cinema Oscar Special

174

Back In The Hei Life

175

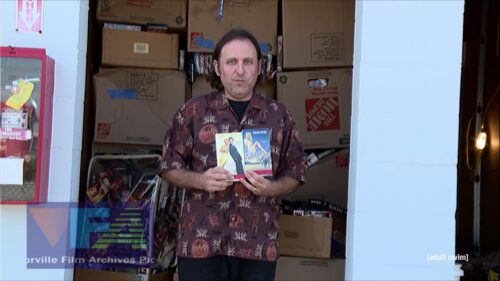

VFA Acquisition Announcement

176

2021 On Cinema Summer Preview (Audio Only)

177

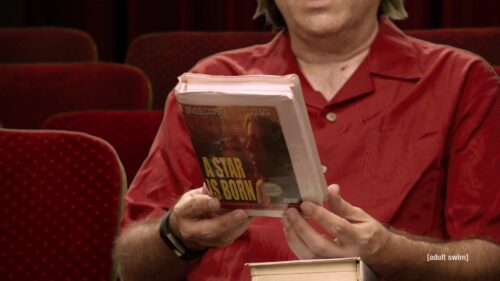

Gregg Turkington’s Classic Movie Time – Episode 1

178

Gregg Turkington’s Classic Movie Time – Episode 2

179

On Cinema Live

180

Gregg Turkington’s Classic Movie Time – Episode 3

181

Rock House – Heilot

182

Introducing HEI Points

183

1201 ‘Mass’ & ‘No Time To Die’

184

1202 ‘Halloween Kills’ & ‘The Last Duel’

185

1203 ‘Dune’ & ‘The French Dispatch’

186

1204 ‘Last Night in Soho’ & ‘Antlers’

187

1205 ‘The Harder They Fall’ & ‘Eternals’

188

1206 ‘Red Notice’ & ‘Ghostbusters: Afterlife’

189

1207 ‘Top Gun: Maverick’ & ‘King Richard’

190

Xposed – Heilot

191

Deck of Cards – Teaser

192

Xposed – Episode 1

193

1208 ‘House of Gucci’ & ‘Resident Evil: Welcome to Raccoon City’

194

Popcorn Shuffle – Heilot

195

1209 ‘Nightmare Alley’

196

Mark’s Cavalcade of Characters – Heilot

197

1210 ‘American Underdog’

198

D4 – My Angel (Official Video)

199

Away in a Manger

200

Xposed – Episode 2

201

Wendy Kerby Valentine’s Day Special Promo

202

Xposed – Episode 3

203

Xposed – Episode 4

204

The Wendy Kerby Valentine’s Day Special

205

Wendy Kerby Valentines Day Special

206

Xposed – Episode 5

207

9th Annual On Cinema Oscar Special

208

Oscar Medley (9th Annual On Cinema Oscar Special)

209

Dekkar (Volume 1)

210

Dekkar (Volume 2)

211

2022 On Cinema Summer Preview (Audio Only)

212

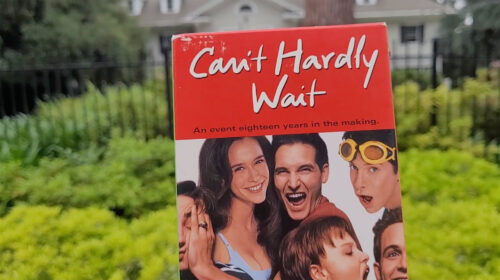

Popcorn Classics (Part 1)

213

Deck of Cards – Teaser Trailer

214

Popcorn Classics (Part 2)

215

Deck of Cards

216

Behind The Cards

217

Deck of Cards: What Went Wrong?

218

1301 ‘Prey for the Devil’

219

1302 ‘My Policeman’ & ‘Enola Holmes 2’

220

1303 ‘Black Panther: Wakanda Forever’ & ‘The Fabelmans’

221

1304 ‘The Menu’ & ‘The Santa Clauses’

222

1305 ‘Devotion’ & ‘Strange World’

223

1306 ‘Scrooge: A Christmas Carol’ & ‘Violent Night’

224

1307 ‘Pinocchio’ & ‘Something from Tiffany’s’

225

1308 ‘A Man Called Otto’ & ‘Avatar: The Way of Water’

226

1309 On Cinema And More In The Morning Christmas Special

227

1310 ‘Alice, Darling’

228

1311 ‘M3GAN’ & ‘The Amazing Maurice’

229

Season 13 Wrap Party

230

An Important Message From Actor Joe Estevez

231

10th Annual On Cinema Oscar Special

232

Pinocchio Through The Years

233

VFA Expert Hollywood Tour

234

2023 On Cinema Summer Movie Recap (Audio Only)

235

Our Cinema Movie Birthdays – Episode 1

236

Our Cinema Movie Birthdays – Episode 2

237

Our Cinema Movie Birthdays – Episode 3

238

Our Cinema Movie Birthdays – Episode 4

239

Case Closed: Breach of Trust

240

1401 ‘Night Swim’ & ‘Weak Layers’

241

On Cinema On Demand Encore – Episode 1

242

1402 ‘The Book of Clarence’ & ‘Mean Girls’

243

On Cinema On Demand Encore – Episode 2

244

1403 ‘Founders Day’ & ‘I.S.S.’

245

On Cinema On Demand Encore – Episode 3

246

Announcing AmatoCon

247

1404 ‘Sometimes I Think About Dying’ & ‘The Underdoggs’

248

On Cinema On Demand Encore – Episode 4

249

1405 ‘Argylle’ & ‘The Promised Land’

250

Ride With The Devil

251

1406 ‘It Ends With Us’

252

1407 ‘Madame Web’ & ‘Bob Marley: One Love’

253

1408 ‘Drive-Away Dolls’

254

1409 ‘Dune: Part Two’

255

1410 ‘Kung Fu Panda 4’ & ‘Imaginary’

256

11th Annual On Cinema Oscar Special

257

Season 14 Wrap Party

258

Amato Crime Family Indictment Announcement

259

District Attorney of San Bernardino Press Conference – 05/02/2024

260

Kalli Amato Presents: The Joey Patrocelli Interview

261

The First Ever Film Buff’s Movie Scavenger Hunt On-Line Event Teaser

262

Tim and Toni’s Guide To Couple’s Massage

263

Live: Tim and Toni’s Guide To Couple’s Massage

264

2024 On Cinema Summer Movie Recap (Audio Only)

265

2024 On Cinema Summer Movie Recap (Audio Only)

266

1501 ‘Babygirl’ & ‘Nosferatu’

267

1502 ‘The Damned’ & ‘Wallace & Gromit: Vengeance Most Fowl’

268

1503 ‘Den of Thieves 2: Pantera’ & ‘Better Man’

269

1504 ‘One of Them Days’ & ‘Wolf Man’

270

1505 ‘Flight Risk’ & ‘Inheritance’

271

1506 ‘Valiant One’ & ‘Dog Man’

272

1507 ‘Love Hurts’ & ‘Heart Eyes’

273

1508 ‘Captain America: Brave New World’ & ‘Paddington in Peru’

274

1509 ‘The Gorge’ & ‘The Monkey’

275

‘84 Charing Cross Road’

276

12th Annual On Cinema Oscar Special

277

Witches and Wizards: A Hollywood Tribute

278

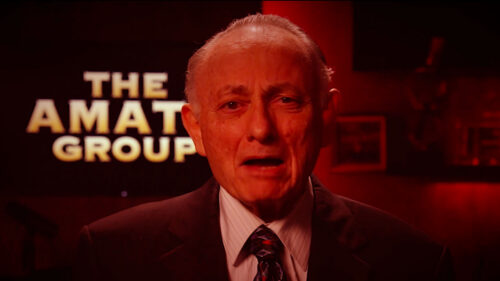

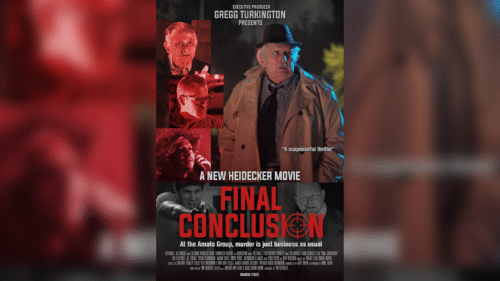

Final Conclusion

279

Summer Movie Roundup 2025

280

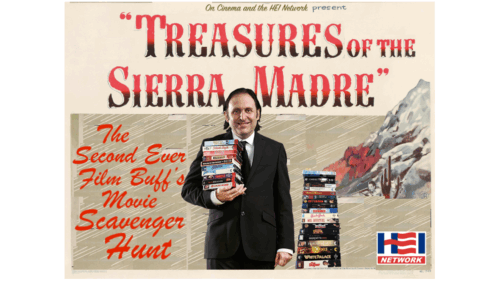

The Second Ever Film Buff’s Movie Scavenger Hunt On-Line Event: Promo

281

1601 ‘The Strangers: Chapter 2’ and ‘Gabby’s Dollhouse: The Movie’

282

1602 ‘Roofman’ and ‘Kiss of the Spider Woman’

283

1603 ‘Black Phone 2’ and ‘Blue Moon’

284

1604 ‘Springsteen: Deliver Me from Nowhere’, ‘Regretting You’, and ‘The Watchers’

285

1605 ‘Bugonia’ and ‘Chainsaw Man – The Movie: Reze Arc’

286

1606 ‘The Running Man’ and ‘Predator: Badlands’

287

VFA Presents: DVD to VHS Conversion

288

1607 ‘Now You See Me: Now You Don’t’ and ‘Jay Kelly’

289

1608 ‘Wicked: For Good’

290

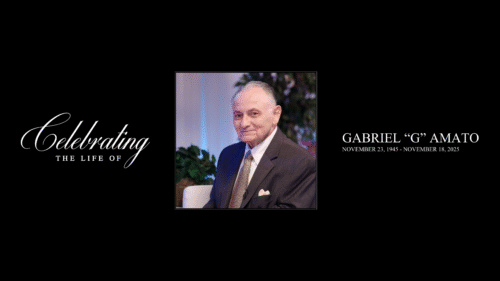

1609 Celebrating the Life of Gabriel “G” Amato

291

1610 ‘Hamnet’ and ‘Ella McCay’

292

New Heidecker – Daddy’s Gone (Music Video)

293

13th Annual On Cinema Oscar Special

294

Dootle Dots On Tour